TL;DR: Many organizations misinterpret metrics, overreact to noise, and miss real signals. Using an A3 approach helps leaders align on the problem first–before introducing Process Behavior Charts as a countermeasure for better decisions and more effective improvement.

Why Process Behavior Charts Need Better Framing

I was invited to give a presentation on Process Behavior Charts and Measures of Success at Cleveland Clinic last week as part of their Quality Forum series. I always enjoy visiting with them, and I walk away very inspired by what they are doing with continuous improvement and daily tiered huddles.

See a previous blog post of mine and a video they have shared about the huddles:

I got to observe many huddles last Thursday, and I will be blogging about that soon. I saw or met many of the people you see in the video.

Applying A3 Thinking to Metrics and Performance

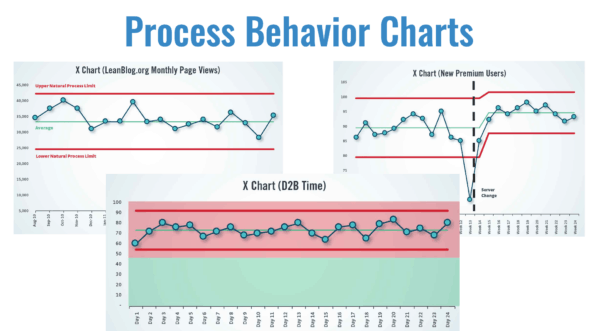

In my presentation, I started by showing a few examples of what Process Behavior Charts (PBCs) might look like.

But, I called “time out” on myself and said:

“Let's not jump to solutions!”

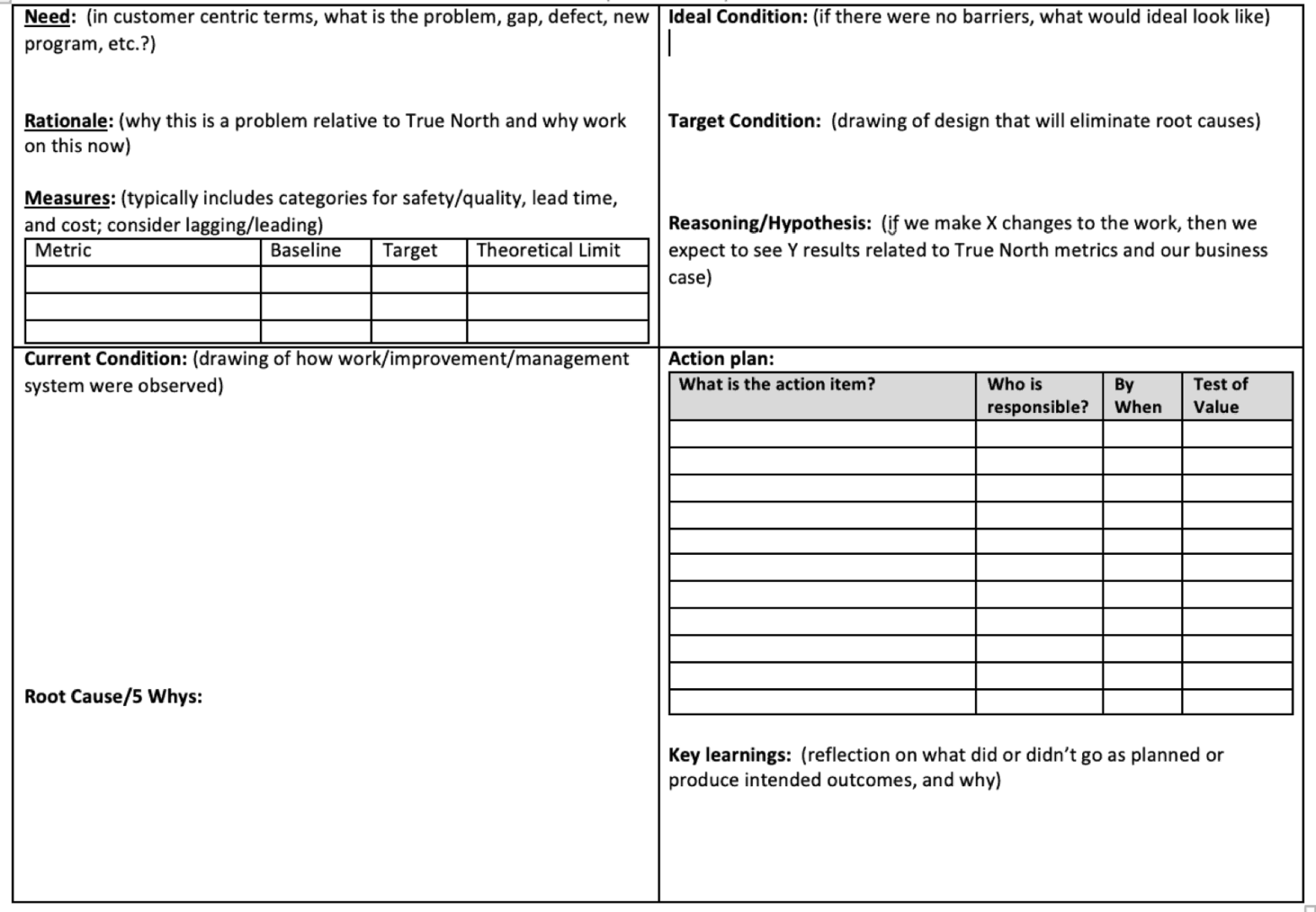

Now, it's not great to go through an A3 with the intent of working toward a pre-ordained solution… so forgive me for that. But I think it's important to talk about the NEED for solutions, and the A3 thought process can help us gain alignment. So, it might have gone something like what follows.

This was the first time I presented things in a pseudo-A3 format. I did so because the Cleveland Clinic has used A3s for problem-solving in many parts of the organization.

First, below you'll find an A3 template that we use at the firm Value Capture (where I'm a subcontractor with a client right now). There are different A3 templates out there (and I've used different ones), but the exact format and wording

Defining the Need: Why Current Metrics Fail Leaders

What's the need? What's the problem or gap that we face?

From my perspective, some need statements might include:

- Incorrect conclusions in evaluating:

  - Changes in performance metrics

  - Impact of improvement efforts

- Wasting time (explaining routine fluctuations)

- Missing signals of a system change

Rationale: Why This Problem Matters

If the need statements are the “what,” then the rationale gets into the “why.” Why does this problem matter? Why is it worth talking about?

Some rationale statements might include:

- Overreactions exhaust people

- Faulty conclusions hamper improvement

- Unfair performance evaluation hurts morale

Does the group we're working with agree with the Need and Rationale? Is there agreement that there's a problem? Is there alignment around these being important problems to solve?

I could probably provide some backing statements or examples for my assertions about Need and Rationale.

Metrics That Reveal Waste, Not Just Results

Can we measure the current state? Can we figure out how to measure our impact so we aren't just guessing that we have an improvement? Can we know there is improvement instead of feeling like it's better?

Here's what I proposed as metrics, even if these things aren't necessarily easy to measure:

| Metric | Baseline | Target | Theoretical Limit |
| --- | --- | --- | --- |
| Time spent investigating root causes that don't exist | X hours a week | 0 hours | 0 hours |
| Leader turnover | Y leaders a year | 50% less | 80% less |
| Staff satisfaction | Z % | 50% better | 95% |

When we're asking for a “root cause” for “common cause variation” in a system, that's a waste of time, as I wrote about in this case study:

Current Condition: How Metrics Are Commonly Misused

Here, I shared examples of common practices that aren't really helpful in answering these three important questions:

- Are we achieving our target or goal?

- Are we improving?

- How do we improve?

Practices that don't help much

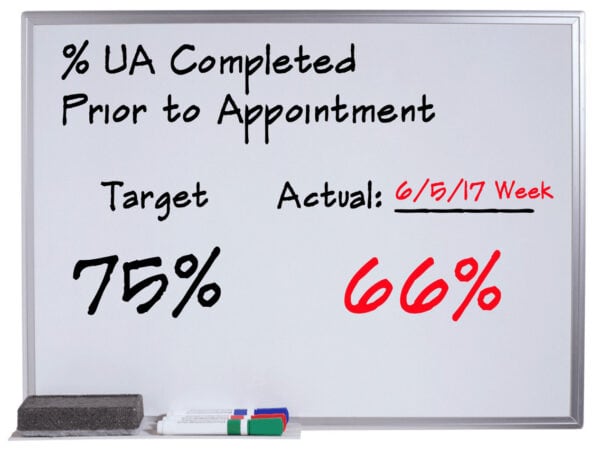

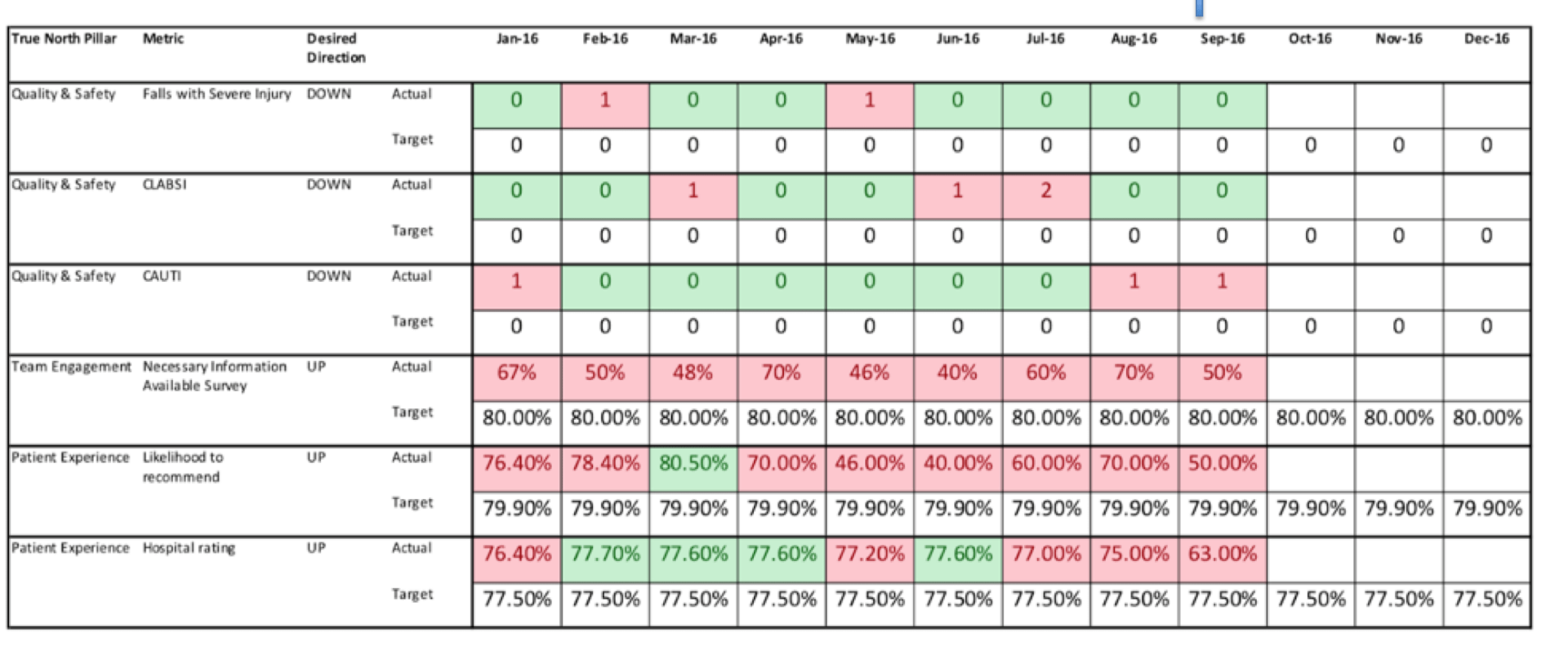

Comparing a single data point to a target:

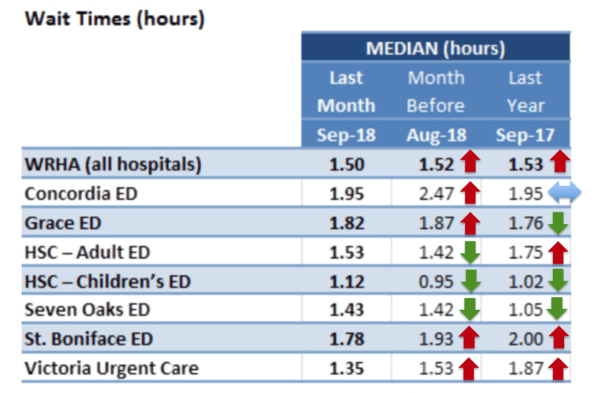

Comparing a single data point to one or two previous data points (with or without color-coded arrows):

“Bowling charts”:

If all we're doing is “barking at the red,” does that require much skill? Does that really help? I showed this cartoon, which always gets a laugh, even if it stings a bit:

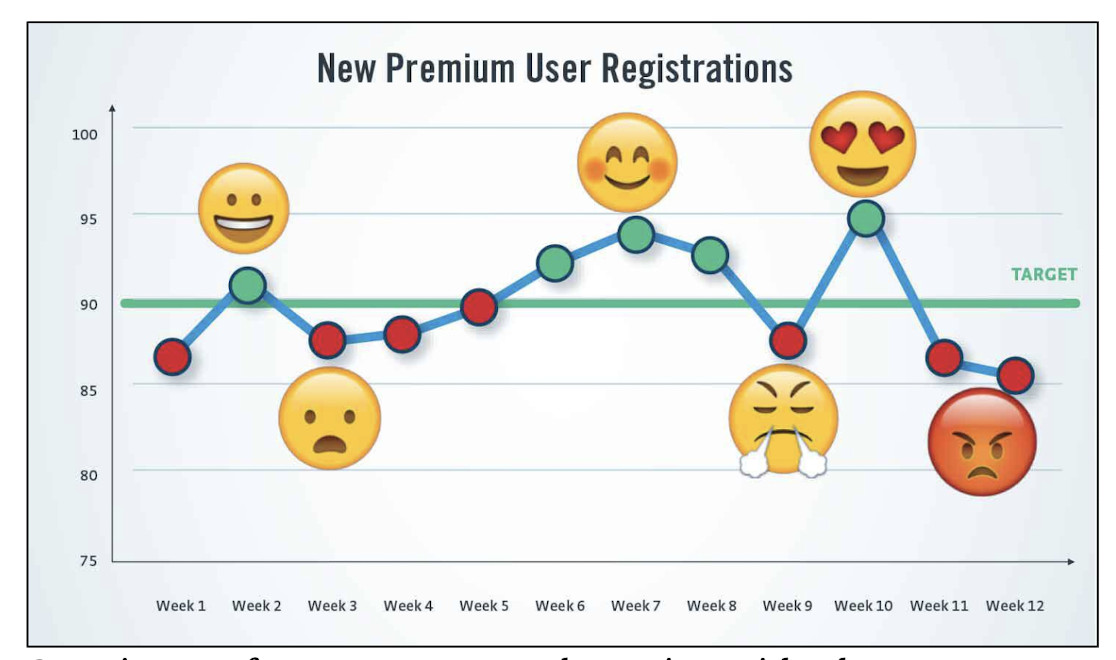

Even run charts aren't super helpful if we're overreacting to every up and down in the metric, as in my “management by emoji” example:

We have to agree that the Current Condition is lacking in some way before it's worth the effort to talk about solutions.

I've long said that:

- Process Behavior Charts are a solution that many don't know about

- Process Behavior Charts are a solution to a problem that many don't recognize as a problem

Causal Analysis: How Bad Metric Habits Persist

Why is our current state the way it is? Why do we have gaps? I proposed:

- “This is how I was taught to do metrics.”

- “This is just how we do metrics here.”

- “Our Lean consultant showed us to do it this way two years ago.”

In summary, a lack of exposure to Process Behavior Charts?

We can't blame people for not knowing something they haven't been taught. Most people haven't ever been taught how to apply “control charts” or “statistical process control” to metrics. PBCs are a form of “control chart,” although I don't like using that word for a number of reasons.

Target Condition: Using Process Behavior Charts as a Countermeasure

Our target condition should include methods that allow us to answer those three questions. The countermeasure I propose is PBCs.

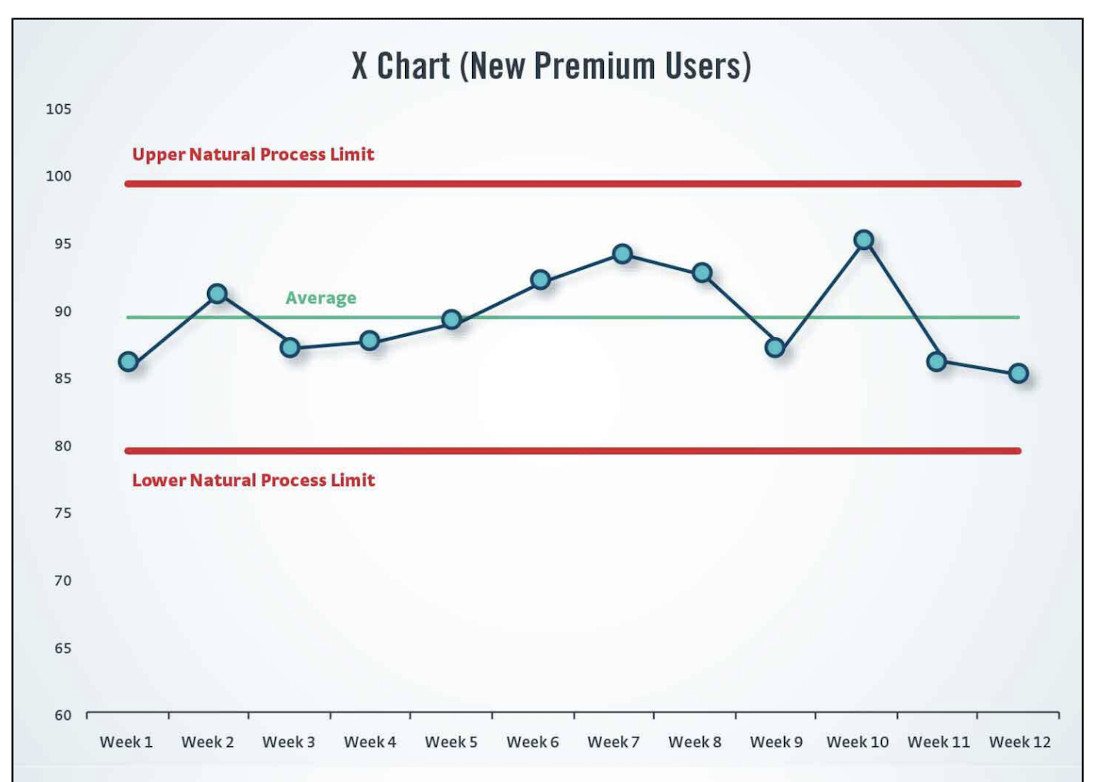

Here's a PBC for that “management by emoji” example:

We would do better if we stopped reacting to noise. Don't ask people to explain noise. Don't ask for root causes of data points that are noise.

Later in the day, we had a couple of good discussions about PBCs. There were questions about:

“What do we want leaders to do?”

One answer might be to react appropriately to signals, when we see them.

I proposed that another important question is:

“What do we want leaders to STOP doing?”

We want them to stop overreacting to noise. That's a tough habit to break.

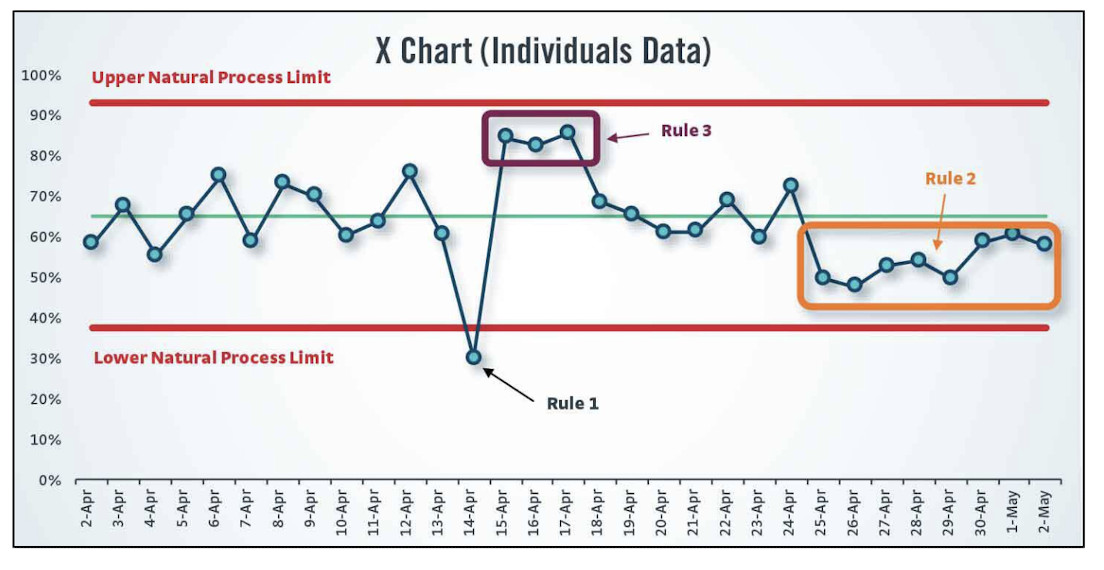

Here's an example of a PBC with three signal types. THOSE are the times when we should ask, “What happened?”

The three main rules for finding signals are, as illustrated above:

- Rule 1: Any single data point outside of the limits

- Rule 2: Eight consecutive points on the same side of the average

- Rule 3: Three consecutive points (or three out of four consecutive points) that are closer to the same limit than they are to the average
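For readers who like to see the rules spelled out, here is a minimal Python sketch of how the three checks might be automated. It rests on assumptions that are not stated above: that the PBC is an XmR chart, with limits set at the average plus and minus 2.66 times the average moving range, and that "closer to the limit than to the average" means beyond the midpoint between the two. The function names `xmr_limits` and `find_signals` and the data are made up for illustration.

```python
from statistics import mean

SCALING = 2.66  # assumed: standard XmR constant applied to the average moving range


def xmr_limits(values):
    """Return (average, lower limit, upper limit) for an XmR chart."""
    avg = mean(values)
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    avg_mr = mean(moving_ranges)
    return avg, avg - SCALING * avg_mr, avg + SCALING * avg_mr


def find_signals(values, avg, lower, upper):
    """Map each rule number to the 0-based indexes of the points involved."""
    signals = {1: set(), 2: set(), 3: set()}

    # Rule 1: any single point outside the limits.
    for i, v in enumerate(values):
        if v < lower or v > upper:
            signals[1].add(i)

    # Rule 2: eight consecutive points on the same side of the average.
    for start in range(len(values) - 7):
        run = values[start:start + 8]
        if all(v > avg for v in run) or all(v < avg for v in run):
            signals[2].update(range(start, start + 8))

    # Rule 3: 3 of 3 (or 3 of 4) consecutive points closer to one limit
    # than to the average, i.e. beyond the midpoint between the two.
    upper_mid = (avg + upper) / 2
    lower_mid = (avg + lower) / 2
    for start in range(len(values) - 2):
        window = list(enumerate(values[start:start + 4], start))
        high = [i for i, v in window if v > upper_mid]
        low = [i for i, v in window if v < lower_mid]
        for group in (high, low):
            if len(group) >= 3:
                signals[3].update(group)

    return {rule: sorted(points) for rule, points in signals.items()}


# Made-up baseline of routine variation, then one unusually high point.
baseline = [10, 12, 10, 12, 10, 12, 10, 12, 10, 12]
avg, lower, upper = xmr_limits(baseline)
print(find_signals(baseline + [18], avg, lower, upper))
# -> {1: [10], 2: [], 3: []}
```

In this sketch the limits come from a baseline period and are then applied to newer points. That is one common way to evaluate whether a change made a difference, but treat it as an illustration rather than a finished tool.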

I shared some examples of PBCs and how they're used to:

- Evaluate an improvement effort to help see if it's really made a difference

- See if there's an unexplained signal in a metric that's worth investigating

The point is not to just create PBCs. The point is to use PBCs to help us get better at getting better — improving the way we evaluate improvements.

Hypothesis: React Less, Lead Better, Improve More

Here's the hypothesis I would pose:

“If we react less (only to signals), we can lead better, and we'll have time to improve more”

Do you agree with Process Behavior Charts as a countermeasure? Do you first agree that we have a problem? Do you agree with my hypothesis? Have you been able to test it in a meaningful way?

If we have alignment, we can move forward to “Action Plans” and then review our Hypothesis to identify “Key Learnings.”

That's a rough “A3” structure for looking at Process Behavior Charts. How would your A3 for this be different?

Thanks again to the folks at Cleveland Clinic for the thought-provoking two days together (we also did my workshop together on Friday).

An A3 doesn't exist to justify a favorite solution–it exists to build shared understanding of the problem. When leaders agree that current metrics create waste, frustration, and false conclusions, Process Behavior Charts stop sounding like “extra analysis” and become a practical leadership tool. The real win isn't better charts–it's better thinking about performance.

Executive Summary: Using A3 Thinking to Improve How Leaders Use Metrics

Purpose

Many organizations struggle with metrics that drive overreaction, wasted time, and poor decisions. This executive summary outlines how A3 thinking can help leaders recognize this problem and align on Process Behavior Charts (PBCs) as a practical countermeasure.

The Problem

Leaders routinely use metrics in ways that make it hard to answer three essential questions:

- Are we achieving our goals?

- Are we improving?

- How should we respond?

Common practices–red/green dashboards, month-over-month comparisons, bowling charts, and reaction to single data points–often lead to:

- Overreaction to routine variation

- Missed signals of real system change

- Time wasted explaining noise

- Unfair performance evaluation of people and teams

These issues are usually treated as “normal management,” not as problems to be solved.

Why This Matters

Misusing metrics has real consequences:

- Leaders and teams spend time investigating problems that don't exist

- Improvement efforts are evaluated incorrectly

- Morale suffers when people are blamed for normal variation

- True opportunities for learning and improvement are delayed or missed

This is not a people problem. It is a system design and thinking problem.

Current State

In many organizations:

- Metrics are reviewed without context or history

- Leaders feel pressure to “explain every red”

- Root cause analysis is demanded for common-cause variation

- Improvement conversations are driven by emotion rather than evidence

These behaviors persist largely because leaders were never taught a better way.

Root Cause

Most leaders have not been exposed to:

- Statistical thinking about variation

- Practical use of control charts for management

- A clear framework for distinguishing signal from noise

As a result, metric misuse becomes normalized.

Target Condition

Leaders use metrics in a way that:

- Distinguishes noise from meaningful change

- Encourages calm, evidence-based responses

- Focuses improvement efforts on systems, not individuals

- Reduces unnecessary investigation and escalation

Countermeasure

Process Behavior Charts, introduced through A3 thinking, provide a structured way to:

- Align on the problem before jumping to solutions

- Define when action is warranted–and when it is not

- Support better leadership decisions with less reactivity

PBCs are not about creating more charts. They are about changing how leaders think and respond.

Hypothesis

If leaders:

- React only to statistically meaningful signals

- Stop demanding explanations for noise

Then they will:

- Lead more effectively

- Reduce wasted effort

- Create space for meaningful, systemic improvement

In short: React less. Lead better. Improve more.

Key Takeaway for Executives

Process Behavior Charts succeed not because they are statistical, but because they support better leadership behavior. Using an A3 to frame the need builds shared understanding, reduces resistance, and positions PBCs as a leadership enabler–not a technical add-on.

Did you intentionally not explain Rules 1, 2 & 3 in the Noise Chart example? I was left hanging.

Hi – Thanks for reading. I illustrated the three main rules in a chart lower down, but I also added the text explaining those rules:

* Rule 1: Any single data point outside of the limits

* Rule 2: Eight consecutive points on the same side of the average

* Rule 3: Three consecutive points (or three out of four consecutive points) that are closer to the same limit than they are to the average

To my hypothesis, here is one reader story that was shared in an Amazon.ca review:

Hi Mark – thank you for joining us, and I do appreciate the A3 thinking. A big part of why we are headed down this path is actually related to strategy execution. If we want to ensure teams can focus on the most important items and attack them with good A3 thinking, then we need a way to have confidence that the other metrics are just moving around as noise, and to get a clear signal when something has changed. A reality is we all have lots of metrics we are responsible for; that will not change, nor should it. We need a clearer way to indicate when we should take action and when we shouldn’t.

Thanks, and thanks, Nate. Your comment is a good summary of the mindset.

I’d add that when a metric is predictable (just fluctuating, or noise) but it’s not capable (not anywhere near consistently hitting the goal), then we can “take action” but the action should be a systematic analysis and improvement method (like A3) instead of just being reactive. Look at a system instead of looking at a data point.
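A rough Python sketch of that distinction is below. It makes several assumptions not stated above: a lower-is-better metric, XmR-style limits (average plus and minus 2.66 times the average moving range), only the "point outside the limits" rule as the test for predictability, and "capable" meaning the upper limit sits at or below the goal. The helper `suggested_response` and the wait-time numbers are hypothetical.

```python
from statistics import mean


def natural_limits(values):
    """Return (lower, upper) XmR-style limits; 2.66 is the assumed constant."""
    avg = mean(values)
    avg_mr = mean(abs(b - a) for a, b in zip(values, values[1:]))
    return avg - 2.66 * avg_mr, avg + 2.66 * avg_mr


def suggested_response(values, goal):
    """Suggest a response for a lower-is-better metric (hypothetical helper)."""
    lower, upper = natural_limits(values)
    # Simplification: only points outside the limits count as signals here.
    if any(v < lower or v > upper for v in values):
        return "signal: ask what happened"
    if upper <= goal:
        return "predictable and capable: keep monitoring"
    return "predictable but not capable: improve the system (e.g., an A3)"


# Made-up weekly average wait times in minutes, with a goal of 25 or less.
waits = [30, 34, 31, 35, 30, 33, 32, 35]
print(suggested_response(waits, goal=25))
```

With these made-up numbers the metric just fluctuates around 32.5 minutes, so no single week deserves an explanation, yet the goal of 25 is out of reach without changing the system.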

Great post. Keep 'em coming. Is there a rule of thumb as to the correct interval at which these metrics should be kept (i.e., hourly, daily, weekly, monthly, quarterly, yearly)? It seems what may be a signal at one level would be simply noise at another.

Hi Jeff – Thanks and great question.

If the data is basically free and it’s easily available, I’d always rather do a daily chart than a weekly chart… a weekly chart is better than a monthly chart.

Two reasons:

1) You’ll see signals and shifts more quickly with a faster cycle / shorter interval chart. The limits are calculated in a way that helps you not overreact to, say, a daily chart that’s bound to have more point-to-point variation than a weekly or monthly chart would. The limits will be wider when you have more point-to-point variation.

2) Sometimes a signal gets “lost” when it’s averaged out into a weekly or monthly metric. A weekly signal might be lost when averaged out into a monthly metric.
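Both reasons can be seen in a small Python sketch with made-up data. It assumes XmR-style limits (average plus and minus 2.66 times the average moving range) and checks only for points outside the limits. The names `natural_limits` and `points_outside` are hypothetical.

```python
from statistics import mean


def natural_limits(values):
    """Return (lower, upper) XmR-style limits; 2.66 is the assumed constant."""
    avg = mean(values)
    avg_mr = mean(abs(b - a) for a, b in zip(values, values[1:]))
    return avg - 2.66 * avg_mr, avg + 2.66 * avg_mr


def points_outside(values):
    """Return 0-based indexes of points beyond the limits."""
    lower, upper = natural_limits(values)
    return [i for i, v in enumerate(values) if v < lower or v > upper]


daily = [10, 12] * 28  # 56 made-up days of routine variation
daily[31] = 20         # one unusual day in week 5
# Roll the same data up into eight weekly averages.
weekly = [mean(daily[i:i + 7]) for i in range(0, len(daily), 7)]

print("daily signals:", points_outside(daily))
print("weekly signals:", points_outside(weekly))
print("daily limits:", natural_limits(daily))
print("weekly limits:", natural_limits(weekly))
```

With this data, the daily chart flags day 31 while the weekly chart shows nothing outside its limits, because the unusual day is averaged in with six routine ones. The daily limits also come out wider than the weekly ones, reflecting the larger point-to-point variation.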

Again, if the cost of collecting data daily is outrageous, or if the metric HAS to be monthly for some outside reason (regulatory, etc.), then the best you can do, frequency-wise, is the best you can do…