TL;DR:

Single webinar numbers and year-over-year averages often distort reality. By using run charts and Process Behavior Charts, you can separate signal from noise, avoid overreaction, and make better decisions about growth, capacity, and investment.

I manage KaiNexus's webinar program, which means I see the numbers people naturally gravitate toward: registrations, attendance, and year-over-year comparisons. Those numbers are easy to report, easy to celebrate, and easy to misunderstand.

In 2025, that problem is more common than ever. Dashboards update instantly. Comparisons are automatic. And it's tempting to draw conclusions from whatever number happens to be newest, highest, or lowest.

But webinar metrics–like most business metrics–are outputs of a system. Looking at them one data point at a time, or comparing one period to another, often creates more confidence than insight.

Our goal with webinars isn't popularity for its own sake. It's learning, community, and usefulness–for existing KaiNexus customers and for people who are still getting to know us. That means the right question isn't “Was this webinar up or down from last month?” It's “What does the system actually tell us over time?”

This post walks through why single numbers, monthly comparisons, and even annual averages can mislead–and how run charts and Process Behavior Charts provide a clearer, calmer, and more actionable way to evaluate webinar success without overreacting.

Why Single Webinar Metrics Are So Easy to Misuse

Keep in mind, I don't have any specific target for webinar registrations, and there is no sort of incentive compensation. That removes any incentive for me to artificially boost the numbers in some way that wouldn't help the company. I'm not tempted to do webinars on popular, but off-topic, topics — is there such a thing as a “clickbait webinar”? I'm certainly not tempted to go online and “buy” attendees (which is probably something that could be done, just as people, unfortunately, buy social media “likes” or positive reviews on Amazon).

The mission is to provide good content and to build community. We want to help our existing customers and provide a good experience for those who are considering becoming customers. Our director of marketing is also looking to get “new contacts” through the webinar promotion — and that's a contributor to the marketing and sales pipeline that could lead to more “qualified leads” and, eventually, more customers.

When ‘Good’ and ‘Bad’ Numbers Both Miss the Point

People often report single numbers in a way that makes things look good.

For example, regarding the November webinar, I could report:

“We had 575 registrations, the second highest in our history.”

If I were angling for an end-of-year performance review, I'd trumpet that number and that fact. Numbers don't lie… but a single number misses context.

I presented the December webinar the other day, and I could brag that its 498 registrations were the third highest in our history. But if somebody were trying to make the situation look worse, they could factually report:

“Registrations for December were down 13.4% from the previous month.”

That would be factually correct, but we're again missing context. Would that decline mean a negative impact on the annual review (a review that, again, I don't formally get)? Would recency bias lead a manager to say, “Well, your performance trailed off a bit, so you don't get the top evaluation or pay increase this year”?
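For reference, here is the arithmetic behind that "down 13.4%" claim as a minimal Python sketch. The function name `percent_change` is mine, not anything from our tooling; the two registration counts are the ones quoted above.

```python
def percent_change(previous: float, current: float) -> float:
    """Return the percent change from `previous` to `current`."""
    return (current - previous) / previous * 100


# Registration counts quoted in this post.
november, december = 575, 498

print(f"{percent_change(november, december):.1f}%")  # -13.4%
```

The calculation is easy and the result is correct. It just says nothing about whether a drop of that size is routine variation for this system.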

Why Averages and Year-Over-Year Comparisons Still Mislead

Organizations often compare annual averages against each other. If one data point (or two) doesn't tell us much, comparing two averages usually doesn't help us much either.

I could report:

“Average webinar registrations in 2019 (332) were up 25% from 2018 (264 per webinar).”

Again, that's factually correct, but what does that tell us? What does that say about my performance as a webinar leader? Does that mean I've chosen better speakers and more attractive topics? But what about quality? Does “more attractive” mean “better” or “more helpful”?

Maybe registrations are up because the KaiNexus user base is larger than it was before, as the company grows and adds new customers. More users means more people to promote the webinars to. If I measured something like “the number of webinar registrations as a percentage of KaiNexus users,” might the webinars turn out to be relatively less popular? Would I expect the percentage of people who are interested enough to register to be consistent over time?

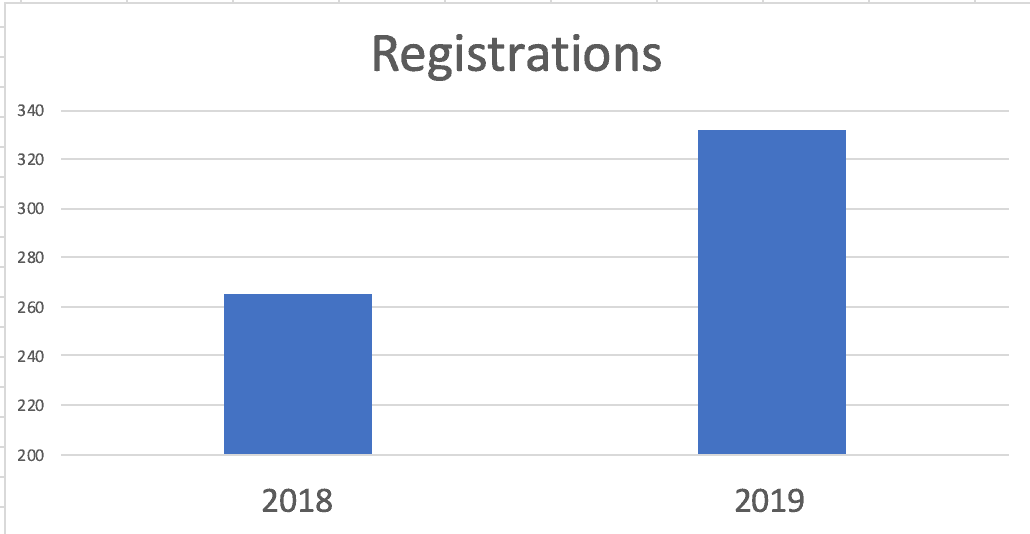

Oh, I could also report the increase as a column chart. One version might be a bit misleading due to the Y-axis:

I could add an arrow to emphasize the success story I'm trying to tell:

Do you see charts like that on metrics boards, A3s, or conference poster presentations? Do two data points really help us evaluate things properly?

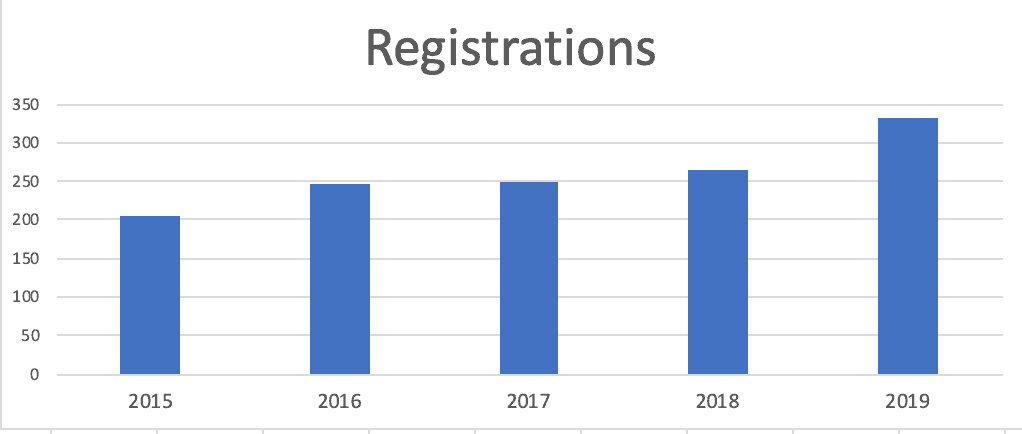

Or, a Y-axis that starts at zero might make the increase look less dramatic:

What if I made a column chart that included the averages of more years?

It's a fact to say:

“Webinar numbers increased for the fourth consecutive year.”

OK, so what? Is it constant growth? Or do 2019's performance and growth look different from (better than) years past?

Again, we only have five data points, which makes it tough to know for sure.

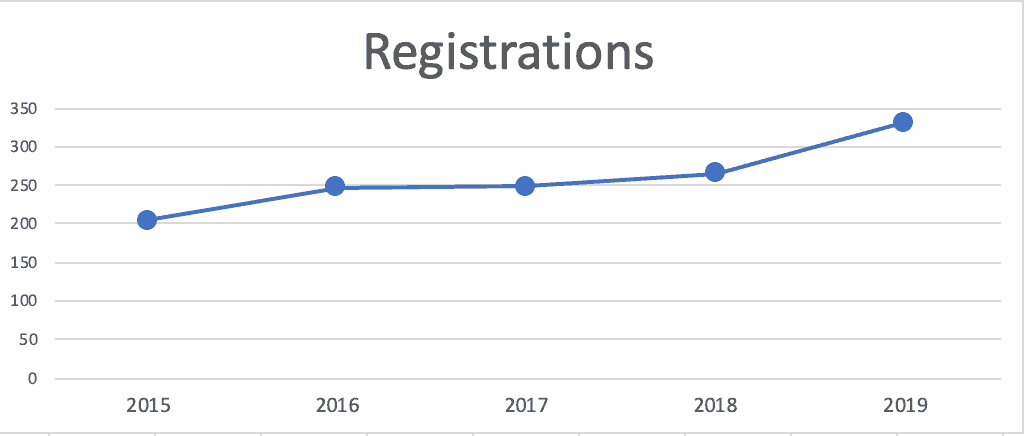

Why Line Charts Beat Columns for Time-Series Data

Here's a run chart of those five years — I prefer run charts (line charts) to column charts for time-series data:

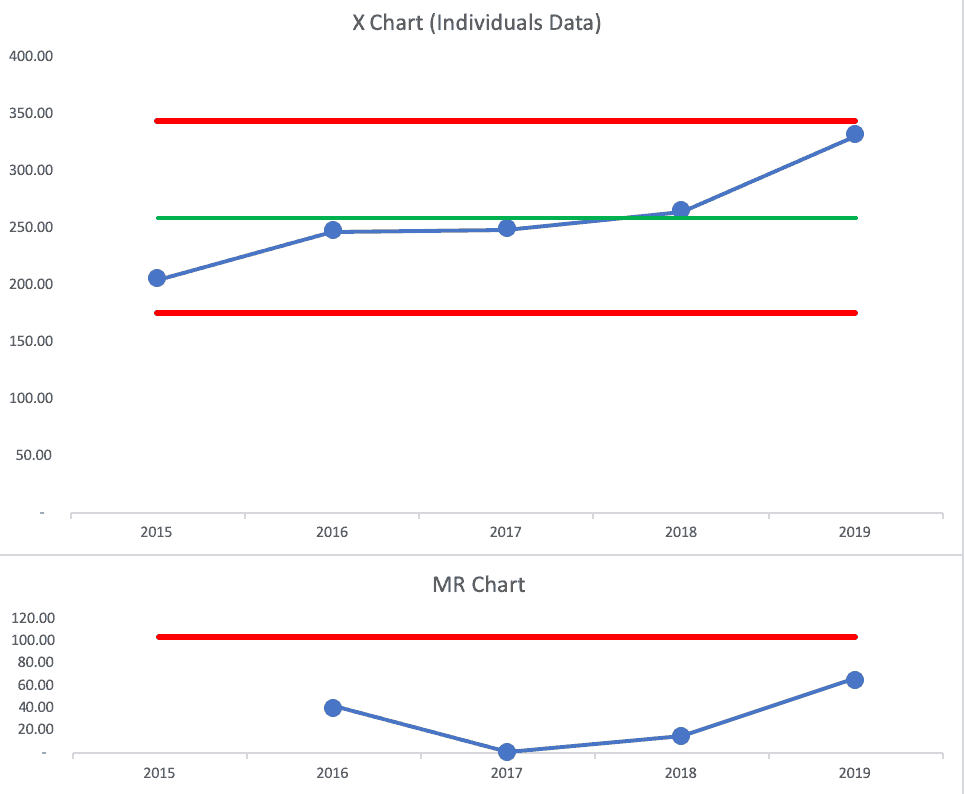

Applying Process Behavior Charts to Webinar Data

Now, this might be controversial to some, but we can make a “Process Behavior Chart” here, although I don't think it's the best approach to use just the five annual averages.

- It's possible to create a PBC with just five data points, although the Lower and Upper Limits won't be as valid as if we had 15 or 20 data points

- It's also OK to create a chart based on averages (read more here)

If we look at the X Chart and the MR Chart, we see that 2019's data point is not quite a “signal.”
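For readers who want to see how the average and limits on an X Chart and MR Chart are typically calculated, here is a minimal Python sketch. It uses the standard XmR scaling factors (2.66 for the X Chart limits, 3.268 for the MR Chart upper limit). The function name `xmr_limits` and the sample numbers are assumptions for illustration only; they are not the actual webinar data or the calculation inside any particular tool.

```python
from statistics import mean


def xmr_limits(values):
    """Compute the average and limits for an XmR (Process Behavior) chart."""
    if len(values) < 2:
        raise ValueError("need at least two data points")

    # Moving range: absolute difference between consecutive points.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    average = mean(values)
    mr_bar = mean(moving_ranges)

    return {
        "average": average,
        "lower_limit": average - 2.66 * mr_bar,
        "upper_limit": average + 2.66 * mr_bar,
        "mr_average": mr_bar,
        "mr_upper_limit": 3.268 * mr_bar,
    }


# Made-up numbers for illustration only (NOT the real registration data).
example = [250, 270, 240, 290, 330]
for name, value in xmr_limits(example).items():
    print(f"{name}: {value:.1f}")
```

For count data such as registrations, a calculated lower limit below zero is usually just treated as zero, since a negative count can't happen.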

Finding Real Signals in Individual Webinar Results

Since the Process Behavior Chart (aka the “XmR Chart”) is also known as the “Individuals Control Chart,” I think a better PBC would be one that plots each webinar as its own data point.

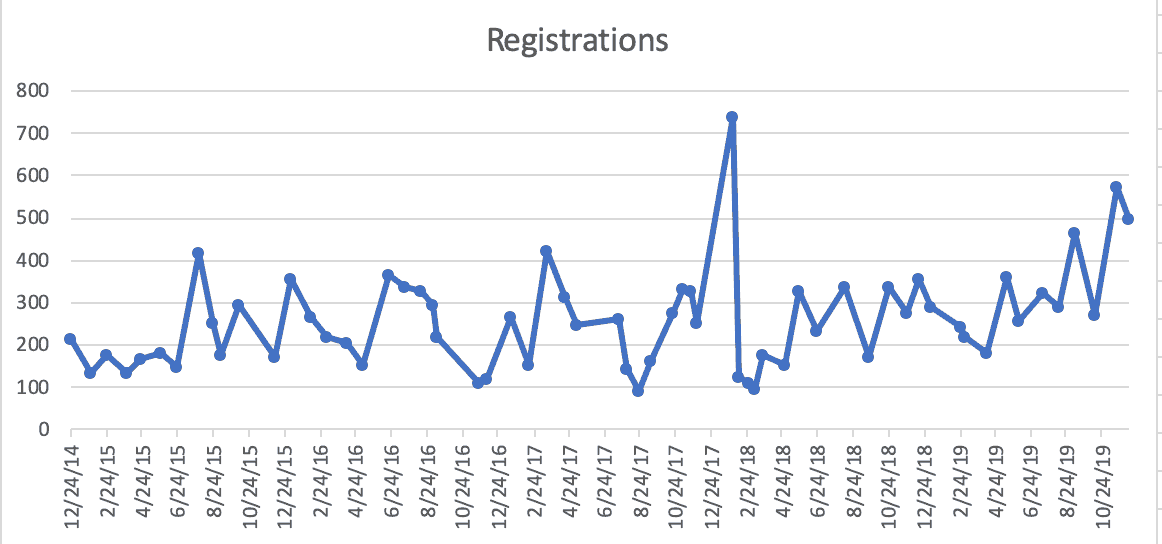

Here is that data, first, as a run chart (something that's helpful even without adding the average and limits):

Does it look like there's a trend? Fluctuation over time? There's what looks like an outlier in early 2018 (the highest number ever of 738).

A run chart is helpful, but a PBC is even better.

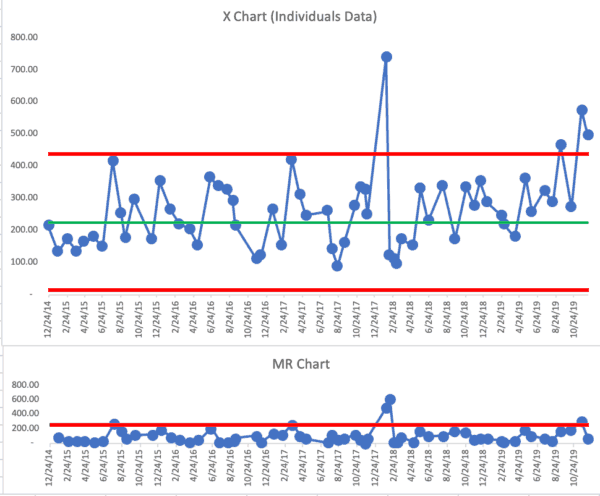

I track this data in the “control chart” functionality that's part of the KaiNexus system (note, this is functionality that's currently being improved and expanded upon for future releases):

From a baseline time period, the chart has a calculated average of about 250 and an Upper Limit of just over 500.

The chart shows three “signals”:

- January 2018 webinar is above the upper limit

- November 2019 webinar is also above the upper limit

- Three of the last four webinars in 2019 are closer to the upper limit than they are to the average

We almost have a run of eight consecutive webinars with above-average registrations. It seems like the number of registrations is no longer simply fluctuating around that average. It's close to showing a sustained upward shift… or at least there are signals that show the system isn't just predictably fluctuating there.
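The three kinds of signals mentioned here (a point outside the limits, three of four points closer to a limit than to the average, and a run of eight on one side of the average) can be checked mechanically. The sketch below is one way to do that in Python; the function name `find_signals`, the rule labels, and the sample data are all hypothetical, and this is not how the KaiNexus control chart is implemented.

```python
def find_signals(values, average, lower, upper):
    """Return (rule, index) pairs; index is the last point of each pattern."""
    signals = []
    # +1 if a point is above the average, -1 if below, 0 if exactly on it.
    side = [(v > average) - (v < average) for v in values]

    for i, v in enumerate(values):
        # Rule 1: a single point outside the limits.
        if v > upper or v < lower:
            signals.append(("outside_limits", i))

        # Rule 2: eight consecutive points on the same side of the average.
        if i >= 7:
            window = side[i - 7 : i + 1]
            if window[0] != 0 and all(s == window[0] for s in window):
                signals.append(("run_of_eight", i))

        # Rule 3: three of four consecutive points closer to one limit
        # than to the average (i.e., beyond the midpoint on that side).
        if i >= 3:
            window = values[i - 3 : i + 1]
            near_upper = sum(x > (average + upper) / 2 for x in window)
            near_lower = sum(x < (average + lower) / 2 for x in window)
            if near_upper >= 3 or near_lower >= 3:
                signals.append(("three_of_four", i))

    return signals


# Made-up data for illustration. The average (250) and upper limit (500)
# echo the rough figures in the text; the lower limit of 0 is an assumption.
sample = [200, 300, 260, 400, 390, 420, 380, 520]
print(find_signals(sample, average=250, lower=0, upper=500))
```

A pattern that persists gets reported at each point where it still holds, so in practice you would look at the first index where a rule fires.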

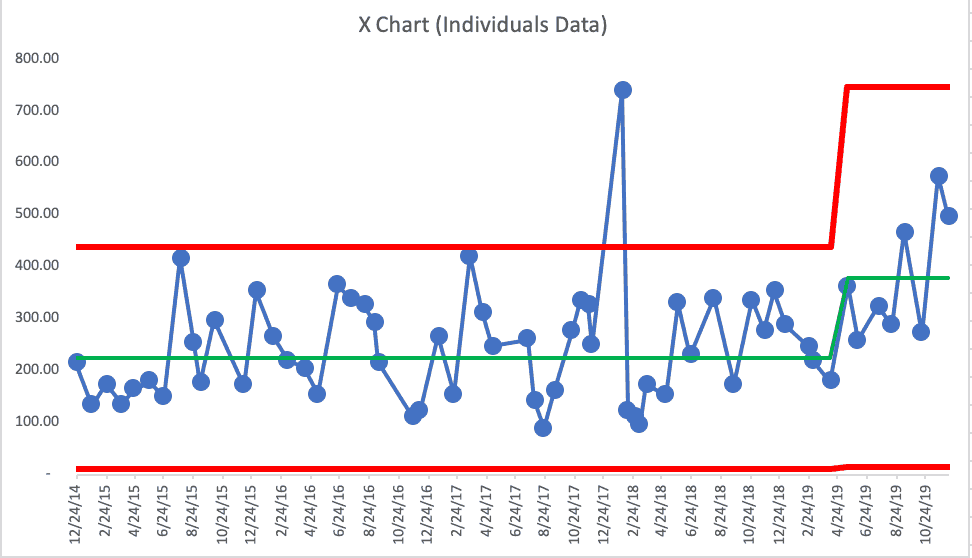

Here's how the PBC looks in the Excel template I normally use. I'm using the first 24 data points as the baseline for calculating the average and the limits. This is apparently different from the control chart in KaiNexus, as the average is 227 and the upper limit is 439.

This shows that three of the last four webinars exceeded the upper limit. We also see eight in a row above the average.

I'm not trying to selectively choose a baseline period that would make a stronger case. I think my original baseline average used fewer initial data points, and there was really no reason to revise the average and limits unless I saw a signal.

Since I see this sustained increase (evidence that 2019 webinar registrations are meaningfully higher than in the past), I could shift the average and the limits.
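Shifting the average and limits amounts to recalculating them from the data points after the apparent change, while keeping the original baseline for the earlier period. Here is a rough Python sketch of that idea. The helper names, the choice of where the shift starts, and the sample data are all assumptions for illustration; picking the shift point is a judgment call that the code does not make for you.

```python
from statistics import mean


def limits(segment):
    """Return (lower, average, upper) for one segment of data."""
    if len(segment) < 2:
        raise ValueError("each segment needs at least two data points")
    moving_ranges = [abs(b - a) for a, b in zip(segment, segment[1:])]
    avg = mean(segment)
    mr_bar = mean(moving_ranges)
    return avg - 2.66 * mr_bar, avg, avg + 2.66 * mr_bar


def limits_with_shift(values, baseline_n, shift_start):
    """Limits from the first `baseline_n` points, then recalculated
    from index `shift_start` onward."""
    before = limits(values[:baseline_n])
    after = limits(values[shift_start:])
    return before, after


# Made-up data with an obvious step change (NOT the real webinar data).
data = [10, 12, 11, 13, 12, 20, 22, 21, 23, 22]
before, after = limits_with_shift(data, baseline_n=5, shift_start=5)
print("before:", [round(x, 2) for x in before])
print("after: ", [round(x, 2) for x in after])
```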

In the past, I would have predicted that webinars would get about 250 registrations and probably no more than 500.

Getting 500 registrations is meaningful because that's when we start seeing more than 100 people trying to sign in live. The limit on our GoToWebinar account is 100, so we risk angering people because they can't get in. Bumping up to the next tier of the GoToWebinar subscription costs more. But maybe that's just a cost of growth, and it's worth it to not anger customers and prospects.

Here is the revised X Chart:

Using Process Behavior Charts to Predict and Plan

One purpose of a Process Behavior Chart is to predict future performance. Going forward into 2020, I'd predict that the average webinar registration is likely to be about 380 and the upper limit is about 750. We'll use this data to have a discussion about upgrading our GoToWebinar account.
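To make the capacity discussion concrete, here is a back-of-the-envelope Python sketch. It assumes that live sign-ins scale in proportion to registrations, using the rough "500 registrations means about 100 live" relationship mentioned earlier. That fixed ratio is my simplifying assumption, not a measured rate, and the function name `expected_live` is hypothetical.

```python
CAPACITY = 100          # live-attendee limit on the current account (from the post)
LIVE_RATIO = 100 / 500  # ASSUMPTION: roughly 1 in 5 registrants signs in live


def expected_live(registrations, live_ratio=LIVE_RATIO):
    """Estimate live sign-ins from a registration count."""
    return registrations * live_ratio


# Predicted figures for 2020 from the revised chart.
for label, registrations in [("predicted average", 380), ("predicted upper limit", 750)]:
    live = expected_live(registrations)
    status = "over capacity" if live > CAPACITY else "within capacity"
    print(f"{label}: about {live:.0f} live attendees ({status})")
```

Under that assumption, a typical webinar fits within the current limit, but one near the predicted upper limit would not, which is the kind of framing that helps the upgrade conversation.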

That's where I think data like this is helpful: in understanding trends or shifts in performance (or finding outliers). Again, we're not using this data to evaluate, reward, or punish me at KaiNexus… and that's OK.

What Better Webinar Metrics Actually Enable

When we stop reacting to isolated numbers and start looking at systems over time, the conversation changes. Process Behavior Charts help us ask better questions:

- Has the system actually changed?

- Are we seeing improvement–or just noise?

- What decisions does this data justify right now?

For webinars, that means smarter capacity planning, clearer investment decisions, and less temptation to game metrics for appearances. More importantly, it reinforces a culture where learning matters more than chasing vanity numbers.

If you want to go deeper into this approach, I explore these ideas extensively in Measures of Success: React Less, Lead Better, Improve More–including practical examples of using Process Behavior Charts in business settings.
