TL;DR: Four data points can make almost any metric look better–or worse. Without enough context, run charts and trend lines can mislead leaders into seeing improvement where none exists. Process Behavior Charts help distinguish real system change from routine variation.

Why Four Data Points Can Be Misleading

Let's say you have a performance metric where a lower number is better, such as:

- manufacturing product defects

- the number of patients who get an infection

- customer complaints

- the number of SaaS software customers who don't renew

Leaders are often asked to judge improvement based on a small number of data points. But how many points are enough to tell us anything meaningful?

I see a lot of cases where a company shows just a few data points. I saw some charts recently that showed four data points as a “run chart.” It's better to see a graph than a table of numbers, but are four data points enough to prove anything?

Here's a table of numbers, below. The last month is better than the first. Are we improving?

| Month | Value |
| --- | --- |
| Month 1 | 13 |
| Month 2 | 9 |
| Month 3 | 12 |
| Month 4 | 7 |

When a Short Run Chart Makes Improvement Look Real

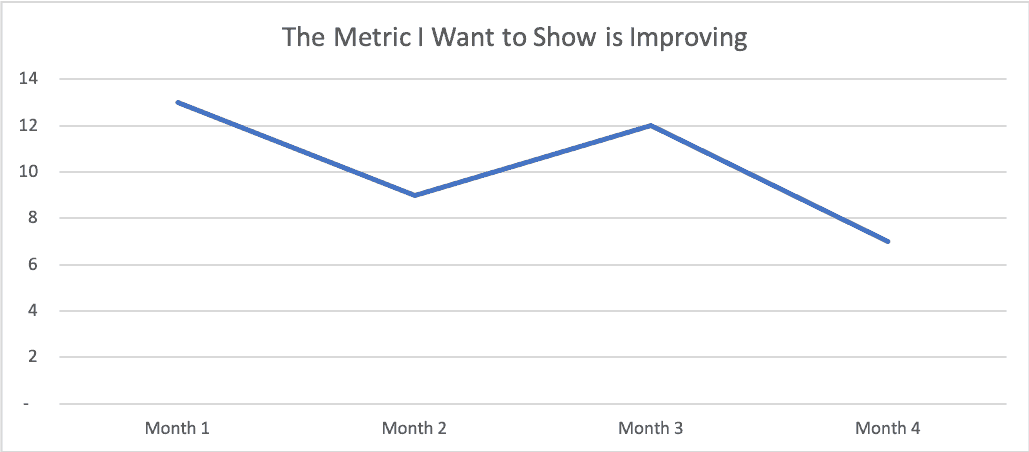

Here's a short run chart… what does this imply visually?

Wow, that's almost 50% improvement from the first point to the fourth. If I'm a consultant, I'd say this proves my effectiveness. If I were running an internal Lean Six Sigma project, I might show this as proof that my project was a success and that you should certify me for whatever “Belt” color I'm being trained for.

All right, end of story… good talk.

But wait…

Adding Context Changes the Story

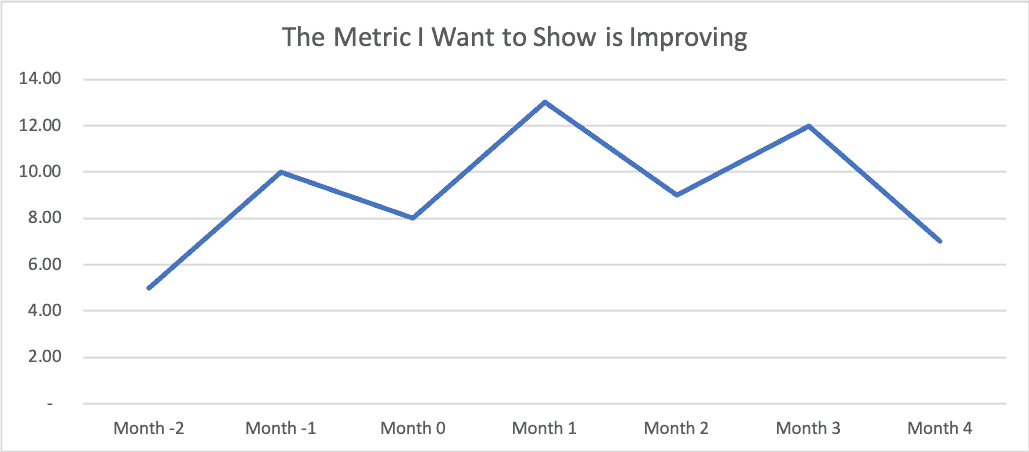

What happens if we pull data from the previous three months to show more historical context?

Uh oh. The metric used to be lower (a value of 5 in “Month -2”), and then it went up… and then it went down. Did our consulting work or our Lean Sigma project really have the effect that we wanted? Is the “voice of the process” telling the story that we hoped it would tell?

Is this metric just fluctuating around an average? Maybe our intervention wasn't really an improvement after all? Maybe it had no effect? Or it had no significant effect?

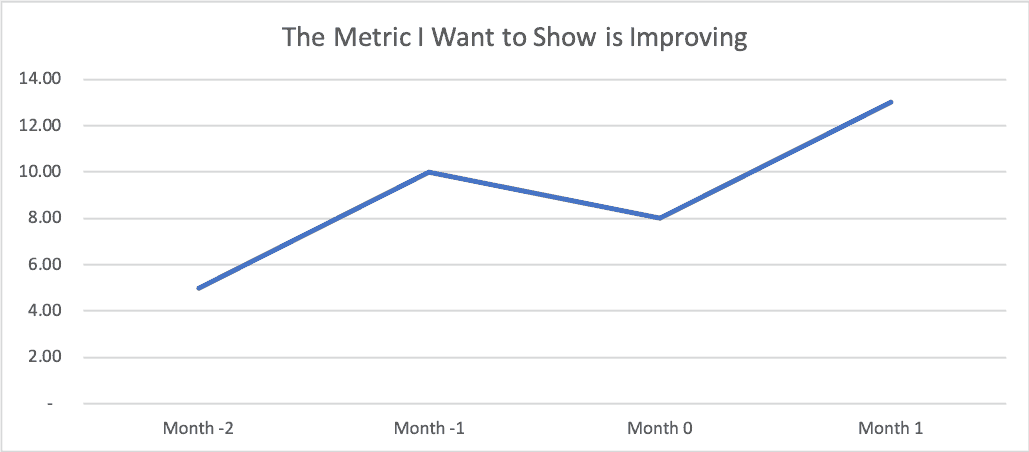

If I wanted to emphasize something like, “Look at the huge problem we were facing” as a way of setting up our later “success,” I might choose to show these four data points… look, the metric is clearly getting worse across these four data points, right?

I think that when we see the “four data points” (or some limited comparison of data), it's more often than not a case where people are intentionally manipulating data (“lies, damned lies, and charts”). We just have to be careful that we don't draw bad conclusions from limited data (data that is lacking context).

The Red Bead Experiment Shows Why This Happens

Each of these sets of four data points is taken from a more complete data set of 24 points. That data comes from my version of the famed Deming Red Bead Experiment, which I facilitated during a team learning event at the offices of the firm Value Capture in Pittsburgh on Wednesday (I sometimes do consulting subcontract work with them and their healthcare clients).

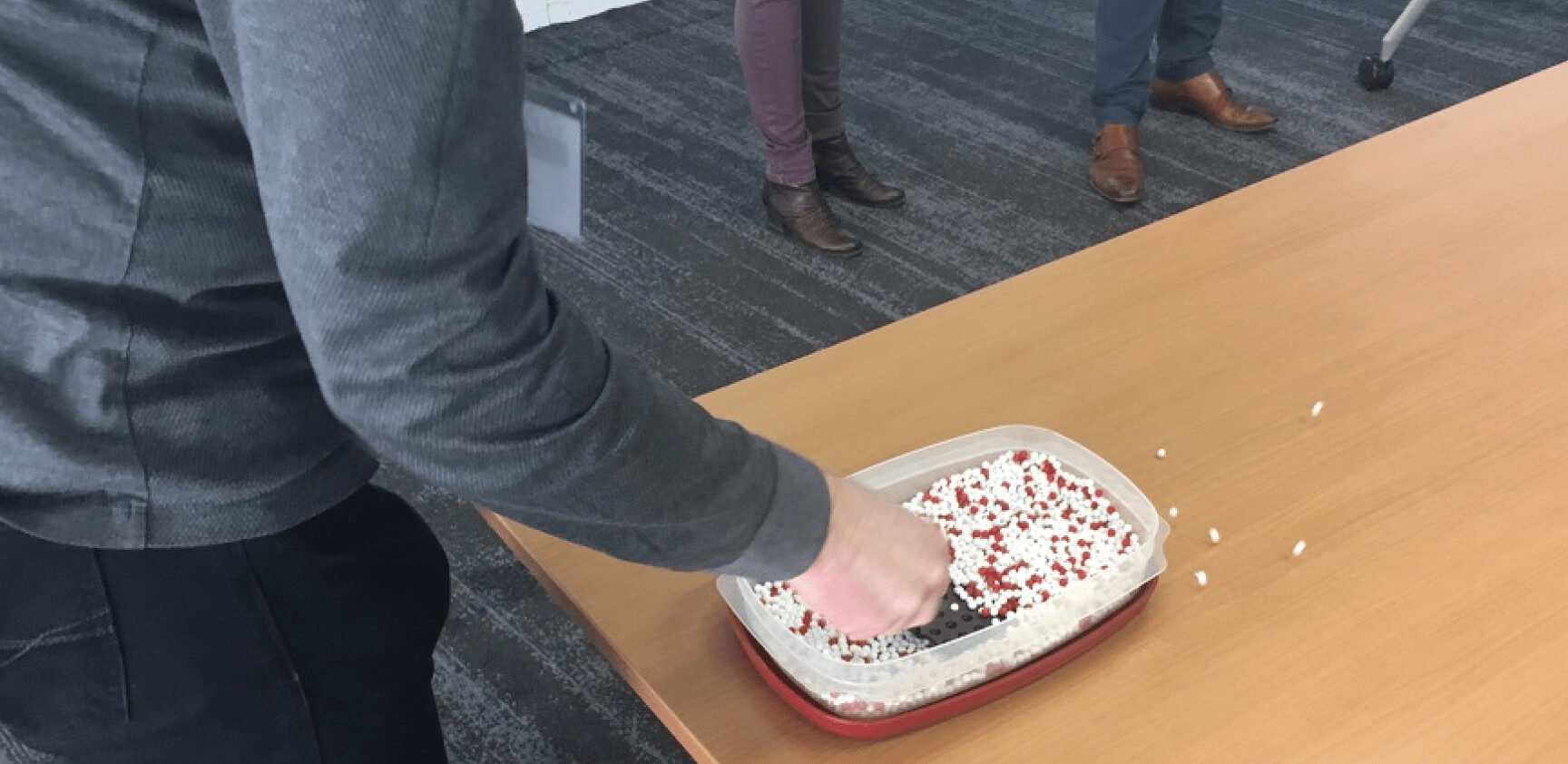

As you might know, one punch line of the Red Bead Experiment (aka the Red Bead Game) is that the same consistent “process” will produce variable results. Here is a photo from a workshop I ran last year in Belgium where we played the game:

In the game, different workers take turns dipping a paddle with 50 holes into the bead box. The red beads are considered defects in the process. Clearly, people are not being set up to succeed here.

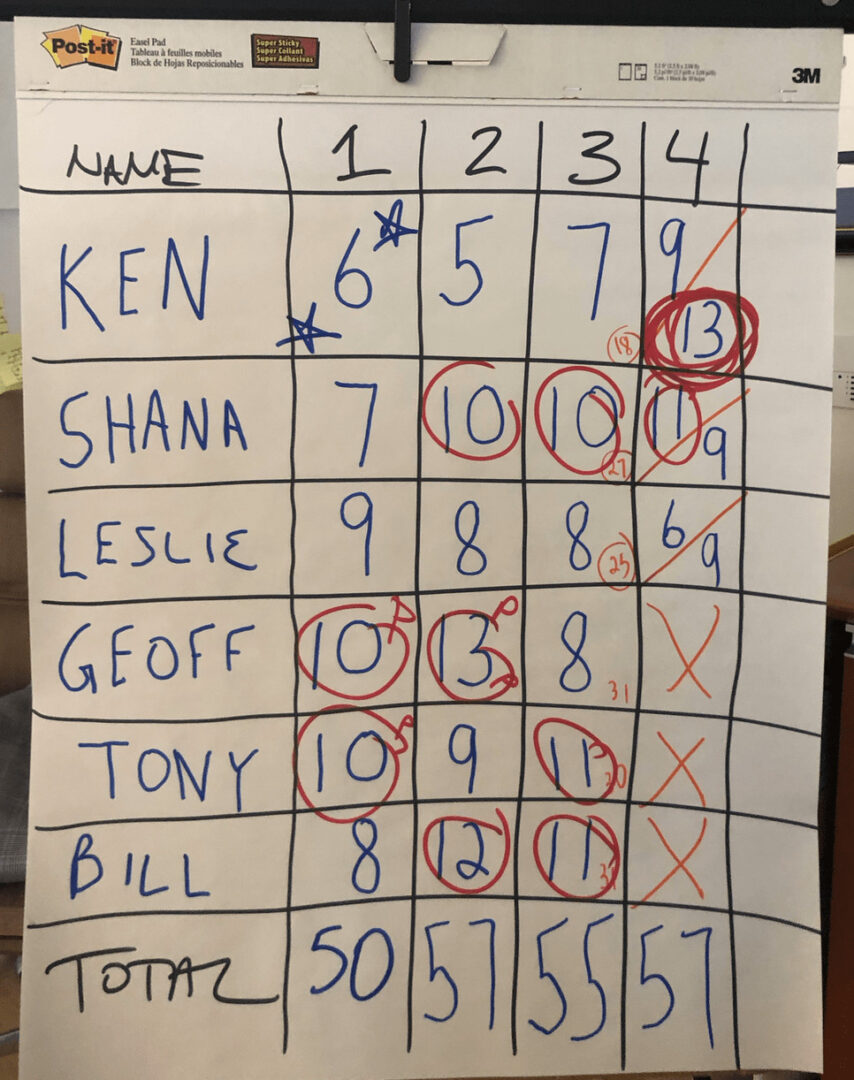

Here is the table of numbers or “scorecard” from the different draws of beads from the other day:

At the end of the game, I circled the numbers that were worse than average. In the role-playing of the game, I browbeat those who drew a big number and rewarded and praised those who drew fewer “defect” beads (red beads)… it's all a sham, since each number is basically a random result of the system.

Every system produces the results that it's designed to produce. That's true, as is the idea that every process and metric will have variation… it's just a question of how much variation is routinely occurring.
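To make the "same process, variable results" point concrete, here is a minimal simulation sketch. It is not the workshop data. The paddle size of 50 and the 24 draws come from this post, but the box contents (4,000 beads, 800 of them red) are an assumption based on a mix often used for this exercise, and the function name is hypothetical.

```python
import random


def simulate_red_bead_draws(num_draws=24, paddle_size=50,
                            total_beads=4000, red_beads=800, seed=None):
    """Return the red-bead count for each paddle draw from a fixed bead box.

    The box contents (4000 beads, 800 red) are an assumption for
    illustration; they are not stated in the post.
    """
    rng = random.Random(seed)
    box = [1] * red_beads + [0] * (total_beads - red_beads)  # 1 = red, 0 = white
    # Each draw samples the paddle without replacement; the beads then
    # go back into the box, so every draw faces the same system.
    return [sum(rng.sample(box, paddle_size)) for _ in range(num_draws)]


draws = simulate_red_bead_draws(seed=1)
average = sum(draws) / len(draws)
print(draws)
print(f"average={average:.2f}, min={min(draws)}, max={max(draws)}")
```

Nothing about the "workers" or the box changes between draws, yet the counts still move up and down. Changing the seed gives a different sequence from the same system.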

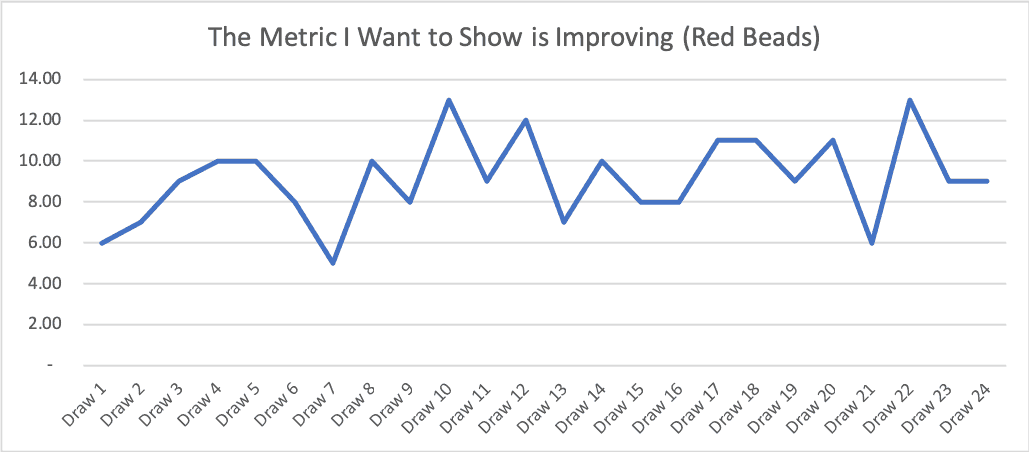

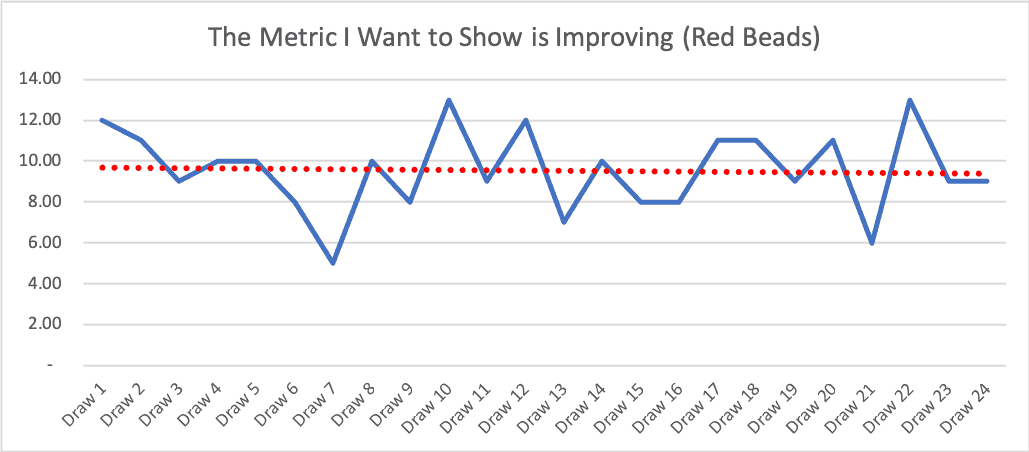

Each of those short run charts that I showed earlier in the post comes from a subset of the Red Bead data. If we plot all 24 data points as a run chart, we see this:

Is that a metric that's improving? No… I think it's more clearly fluctuating around an average over time.

Why Linear Trend Lines Can Be Deceptive

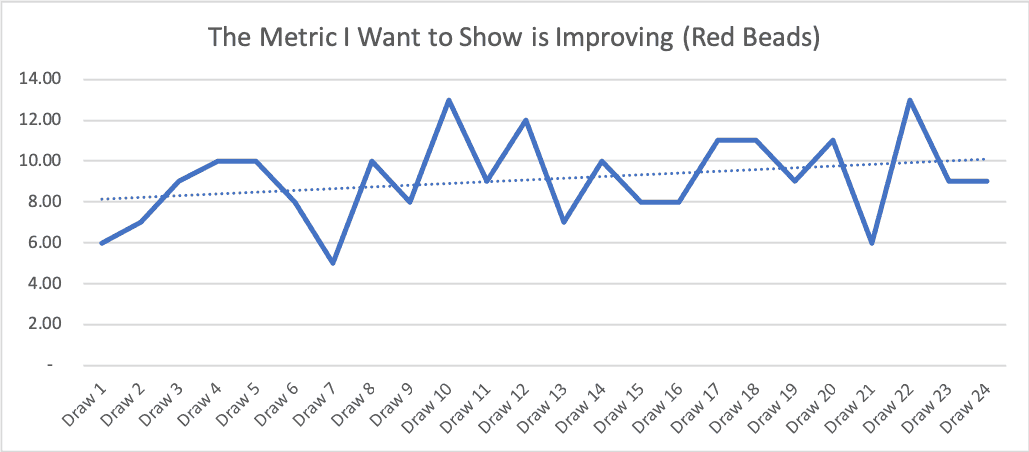

Here's a case where we have to be careful with “linear trend lines” (something that's easy to add with two clicks in Excel). Look what happens (see the dashed blue line):

The linear trend line implies things are getting worse. But if we keep playing the Red Bead Game, the number of red beads won't trend upward like that. The linear trend line is influenced too heavily by the fact that the first few draws in this running of the game on Wednesday happened to be below average.
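The sensitivity described above can be sketched with an ordinary least-squares slope, which is the usual calculation behind a spreadsheet's linear trend line. The two longer series below are hypothetical values, not the workshop data. The four-point series is the table from earlier in this post.

```python
def least_squares_slope(values):
    """Slope of the least-squares line through (1, y1), (2, y2), ..."""
    n = len(values)
    xs = range(1, n + 1)
    x_mean = sum(xs) / n
    y_mean = sum(values) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, values))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    return sxy / sxx


# Hypothetical counts: the same later draws, preceded by three early
# draws that happen to be low in one case and high in the other.
later_draws = [9, 10, 8, 11, 9, 10, 9, 10]
low_start = [5, 6, 7] + later_draws
high_start = [13, 12, 11] + later_draws

print(least_squares_slope(low_start))   # positive slope: looks like "getting worse"
print(least_squares_slope(high_start))  # negative slope: looks like "improving"

# The four values from the table earlier in the post.
print(least_squares_slope([13, 9, 12, 7]))  # -1.5
```

The later draws are identical in both hypothetical series. Only the first three points differ, and that alone flips the direction of the fitted line.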

Let's say the first few draws had been slightly above average (which is quite possible), then we'd see a downward-sloping linear trend line, which would be equally misleading:

What a Process Behavior Chart Shows Instead

What's less misleading is a “Process Behavior Chart,” as I explain in my book Measures of Success. Also see this explanation of the method I created for KaiNexus.

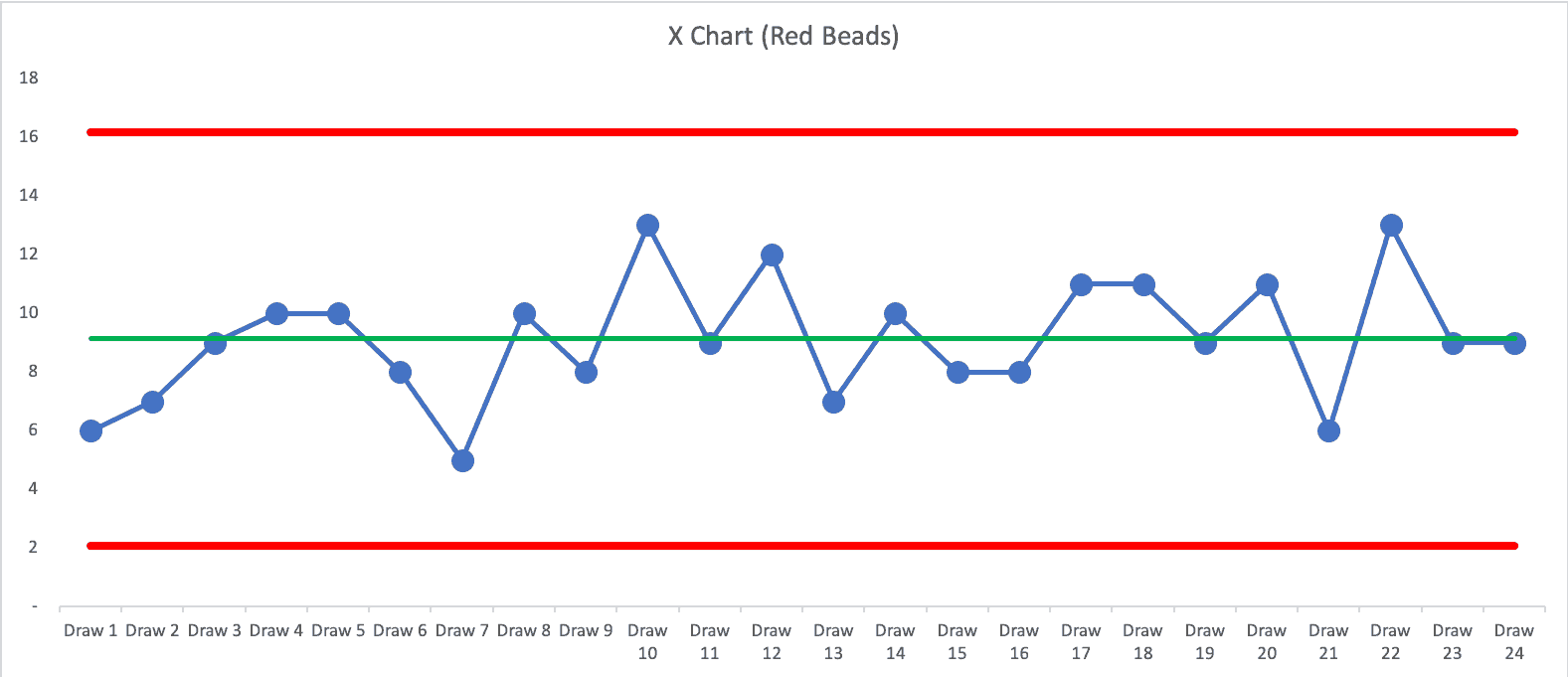

Here is a PBC for Wednesday's running of the beads:

The PBC shows a metric that's “predictable.” That's not surprising since the Red Bead process is predictable. Predictable doesn't mean “zero variation.” In this running of the game, the average number of red beads was 9.125. We calculated the lower and upper “natural process limits” to be 2.07 and 16.18.

The PBC tells us that if we keep drawing beads, we can expect each draw to produce between 3 and 16 red beads. I offered an incentive reward for anybody who drew three red beads or fewer. That result is possible, but the incentive can't influence results (unless somebody cheats in the game).
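For readers who want to see where limits like these come from, here is a minimal sketch. It assumes the standard XmR calculation (average plus or minus 2.66 times the average moving range). This post does not spell out the formula, so treat that as an assumption, and the function name is hypothetical. The only figures taken from the post are the average and the two limits.

```python
import math


def natural_process_limits(values, scaling=2.66):
    """Return (lower limit, average, upper limit) for an XmR-style chart.

    Assumes the standard XmR scaling factor of 2.66 applied to the
    average moving range; the post does not state the formula.
    """
    if len(values) < 2:
        raise ValueError("need at least two values to compute a moving range")
    average = sum(values) / len(values)
    # Moving range = absolute difference between consecutive points.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    average_moving_range = sum(moving_ranges) / len(moving_ranges)
    spread = scaling * average_moving_range
    return average - spread, average, average + spread


# Cross-check using only the figures quoted in the post.
post_lower, post_average, post_upper = 2.07, 9.125, 16.18
implied_amr = (post_upper - post_lower) / (2 * 2.66)
print(round(implied_amr, 2))  # 2.65 (average moving range implied by the limits)

# Bead counts are whole numbers, so the limits translate to a range of
# whole-number results that fall inside them.
whole_low, whole_high = math.ceil(post_lower), math.floor(post_upper)
print(whole_low, whole_high)  # 3 16
```

Under that assumption, the published limits are consistent with an average draw-to-draw change of about 2.65 beads, and rounding inward to whole beads gives the "between 3 and 16" range.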

Each of my earlier four-data-point run charts can be found in the PBC. “Month 1” in the charts is “Draw 10” from the middle of the game. Any four data points might give the appearance of improvement, when it's really just part of the longer-term fluctuation. The appearance of improvement might lead to flawed cause-and-effect analysis, which hampers our improvement efforts.
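One way to see how easy it is to find a flattering set of four points is to slide a four-point window along a longer series and count the windows that end lower than they start. This is a sketch with hypothetical counts, not the workshop data. The four values from the table earlier in the post are embedded in the middle of the series for illustration.

```python
def improving_windows(values, window=4):
    """Return start indexes of windows whose last value is below the first.

    Lower is better for this metric, so such a window "looks like" improvement.
    """
    return [
        start
        for start in range(len(values) - window + 1)
        if values[start + window - 1] < values[start]
    ]


# Hypothetical counts; 13, 9, 12, 7 (the table from this post) starts at index 3.
series = [8, 11, 9, 13, 9, 12, 7, 10, 12, 9, 11, 8]
starts = improving_windows(series)
total_windows = len(series) - 4 + 1

print(starts)  # [1, 3, 8]
print(f"{len(starts)} of {total_windows} four-point windows end lower than they start")
```

In this made-up series, a third of the possible four-point windows would "show improvement," even though nothing in the series was constructed to improve.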

This is why leaders need better ways to interpret metrics–so improvement decisions are based on evidence, not coincidence.

What's my conclusion? Using just a few data points can be misleading, even if we draw a run chart. We need to be careful that we don't allow ourselves to be fooled by a few data points (or a linear trend line). We should also be ethical in not misleading others with data. When in doubt, look at more data and more context. Don't just show one data point from before your change, with three data points after the change. It's really hard to draw good conclusions from four data points… when in doubt, “plot the dots” and “show me the data.”

How Leaders Can Interpret Metrics Without Being Misled

If this post resonates, these ideas are explored in much more depth in my book Measures of Success: React Less, Lead Better, Improve More. The book walks through how to use Process Behavior Charts to interpret metrics correctly, avoid being misled by short-term fluctuation, and focus improvement efforts on real system change–not coincidence.

It's written for leaders and improvement professionals who want fewer knee-jerk reactions to data, better questions about cause and effect, and more confidence that their improvement work is actually making a difference.

If you're serious about improving performance–not just explaining numbers–Measures of Success provides the practical framework to do exactly that.

FAQ: Interpreting Metrics, Run Charts, and Small Data Sets

Are four data points enough to show real improvement?

No. Four data points can easily create the appearance of improvement (or decline), even when the underlying system hasn't changed at all. They don't provide enough context to distinguish real change from routine variation.

Why do short run charts often mislead leaders?

Short run charts exaggerate randomness. With too few data points, normal fluctuation can look like a trend, leading leaders to draw incorrect cause-and-effect conclusions.

Is a trend line reliable with limited data?

No. Linear trend lines are especially misleading with small data sets. They are heavily influenced by where the first few points fall and can suggest improvement or deterioration that won't persist.

What problem does the Red Bead Experiment demonstrate?

The Red Bead Experiment shows that:

- A stable process produces variation

- Individual results are largely random

- Praising or blaming people for short-term results is misguided

It highlights why judging performance based on a few data points is fundamentally flawed.

What's the difference between a run chart and a Process Behavior Chart?

A run chart simply plots data over time.

A Process Behavior Chart (PBC) adds:

- An average (center line)

- Statistically derived upper and lower natural process limits

This allows leaders to see whether a change is signal or noise.
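As a minimal sketch of the simplest signal check, the code below flags any point that falls outside the natural process limits. The limits are the ones quoted earlier in this post. The data values and the function name are hypothetical. PBC practice also uses additional rules based on runs of points, which are not implemented here.

```python
def points_outside_limits(values, lower, upper):
    """Return (index, value) pairs that fall outside the natural process limits."""
    return [(i, v) for i, v in enumerate(values) if v < lower or v > upper]


# Limits from the post; the counts are hypothetical.
lower_limit, upper_limit = 2.07, 16.18
counts = [9, 12, 7, 13, 5, 16, 18]

signals = points_outside_limits(counts, lower_limit, upper_limit)
print(signals)  # [(6, 18)]
```

Every other point in this example, including the swing from 13 down to 5, sits inside the limits and would be treated as routine variation.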

How many data points are needed for a Process Behavior Chart?

While more data is better, a PBC can begin with as few as 8-12 points, improving in reliability as more data is added. The key is consistency and context–not cherry-picked before-and-after snapshots.

What does “predictable” mean in a Process Behavior Chart?

Predictable does not mean perfect or stable at a desired level.

It means the system is behaving consistently within expected limits, even if performance is poor.

Why is reacting to short-term improvement risky?

Reacting too quickly can:

- Reinforce false beliefs about what caused improvement

- Lead to wasted root cause analysis

- Encourage data manipulation

- Undermine trust and psychological safety

What should leaders do instead of reacting to four data points?

Leaders should:

- Look at more data and more context

- Use Process Behavior Charts

- Ask whether the system has truly changed

- Focus on improving the system, not explaining randomness

Where can I learn how to interpret metrics correctly?

The book Measures of Success: React Less, Lead Better, Improve More explains how to use Process Behavior Charts to avoid being misled by small samples, trends, and short-term fluctuations.