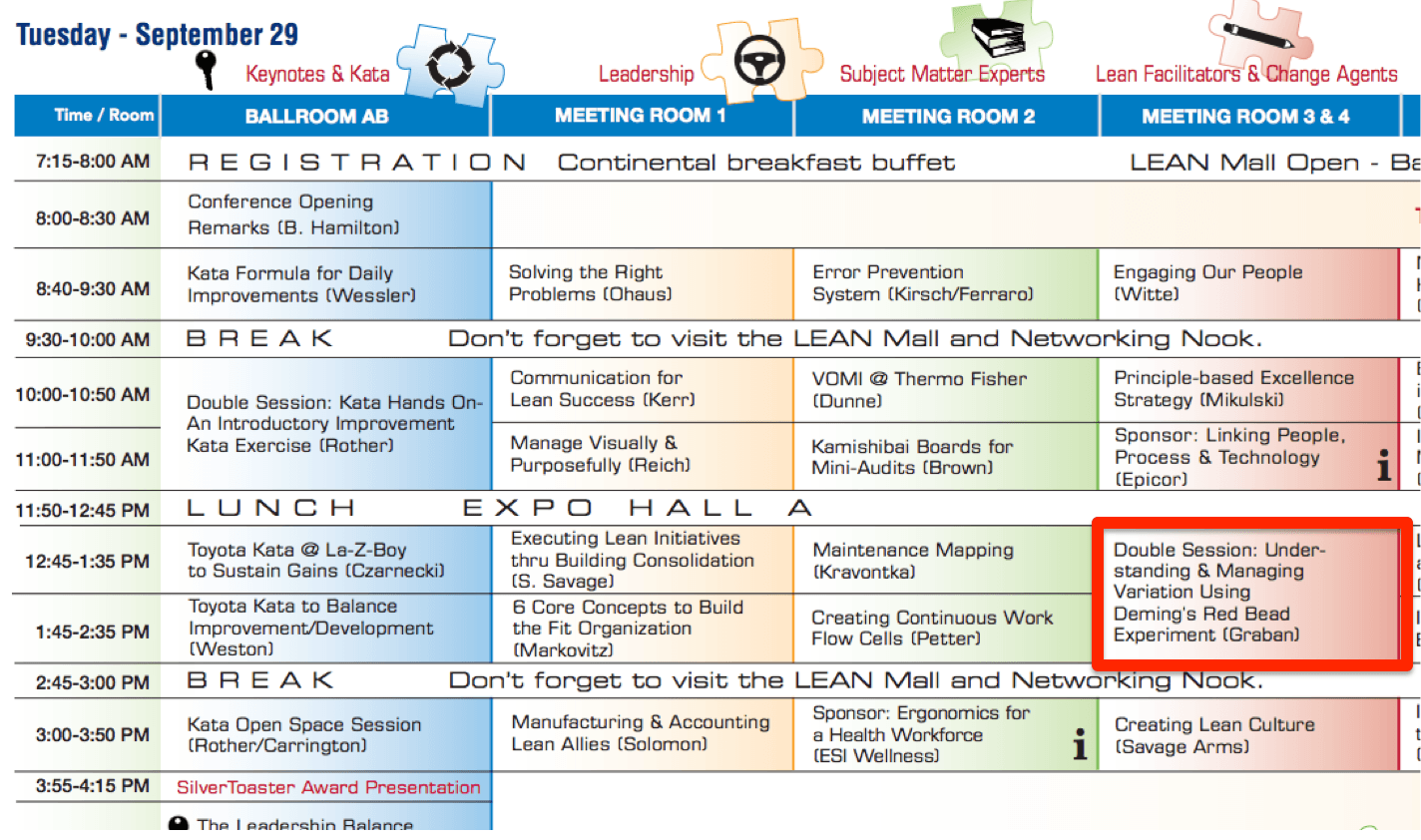

Late next month, at the Northeast L.E.A.N. Conference, I'm facilitating the famous “Red Bead Experiment” of W. Edwards Deming (sometimes referred to as the “Red Bead Game”). If you will be there, please join me on Tuesday, September 29. I'll also do a pre-conference workshop on Kaizen and daily continuous improvement on the afternoon of the 28th.

It's been a few years since I facilitated this, so I did a “dry run” on Monday for my local San Antonio “Lean Coffee” group, which included people from three different hospital systems and a former Toyota employee who now teaches business at Trinity University.

There are plenty of videos on YouTube for context, but here are two from the Deming Institute, one with an excerpt of the game itself and one with some lessons learned:

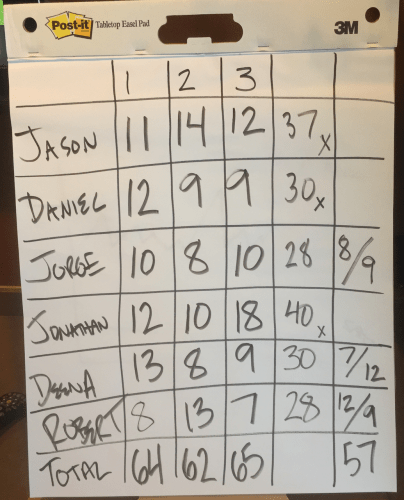

In our session, my “red bead” kit had 20% red beads. So, with a paddle holding 50 beads, you'd expect 10 red beads to be in each round of “production.”
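Here is a minimal sketch of that arithmetic, plus a simulated paddle draw. The 20% red share and the 50-bead paddle come from the session described above; the total kit size of 4,000 beads is an assumption (the post doesn't state it), and the function names are purely illustrative.

```python
import random

PADDLE_SIZE = 50      # beads per paddle (from the post)
RED_FRACTION = 0.20   # share of red beads in the kit (from the post)
KIT_SIZE = 4000       # ASSUMPTION: total beads in the kit, not stated in the post


def expected_red(paddle_size=PADDLE_SIZE, red_fraction=RED_FRACTION):
    """Average number of red beads you'd expect on one paddle."""
    return paddle_size * red_fraction


def draw_paddle(rng, kit_size=KIT_SIZE, red_fraction=RED_FRACTION,
                paddle_size=PADDLE_SIZE):
    """Draw one paddle of beads without replacement and count the red ones."""
    n_red = round(kit_size * red_fraction)
    kit = ["red"] * n_red + ["white"] * (kit_size - n_red)
    return rng.sample(kit, paddle_size).count("red")


if __name__ == "__main__":
    rng = random.Random(2015)  # fixed seed so reruns give the same draws
    print("expected red beads per paddle:", expected_red())
    print("six simulated workers:", [draw_paddle(rng) for _ in range(6)])
```

Every simulated "worker" uses the same kit and the same paddle, so any differences between them come from the draw itself, not from effort or skill.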

The results for our six “willing workers”:

After the first round, I gave Robert and Jorge “employee of the month” certificates (which was, of course, the result of sheer luck). The results of the Red Bead game are basically chance… the product of the system.

In round two, Robert did worse (he “slacked off” after getting the award, or so a bad manager might think) while some who did the worst in round 1 now did better (Deena and Daniel)… it's regression to the mean.

After round two, I set a management target of 3 red beads (very unlikely) and I offered a $20 incentive bonus to anybody who could get only 3 red beads.
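To put a rough number on "very unlikely," here is a small sketch that treats each of the 50 beads as an independent 20% chance of being red (a binomial model). That's an approximation, since a real paddle draws without replacement from a finite kit, and the helper names are hypothetical.

```python
from math import comb


def binom_pmf(k, n, p):
    """Probability of exactly k red beads among n independent draws."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def binom_cdf(k, n, p):
    """Probability of k or fewer red beads among n independent draws."""
    return sum(binom_pmf(i, n, p) for i in range(k + 1))


if __name__ == "__main__":
    n, p, target = 50, 0.20, 3
    print(f"P(exactly {target} red)  = {binom_pmf(target, n, p):.4f}")
    print(f"P({target} or fewer red) = {binom_cdf(target, n, p):.4f}")
```

Under this model, both probabilities come out well under 1% per paddle, so a worker hitting the target would be lucky, not skilled.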

I was supervising them so they couldn't cheat.

In round 3, performance was no better with the goal and the incentive. The results are still the product of the system. We hadn't improved the system.

After round 3, I fired half of the willing workers and had the remaining three do double shifts in round 4.

The number of red beads went down to 57… which was, again, pure chance, but this might have inadvertently reinforced a lesson that firing the bottom performers leads to better performance. We should have done a round 5, which would probably have produced 63 red beads or so.

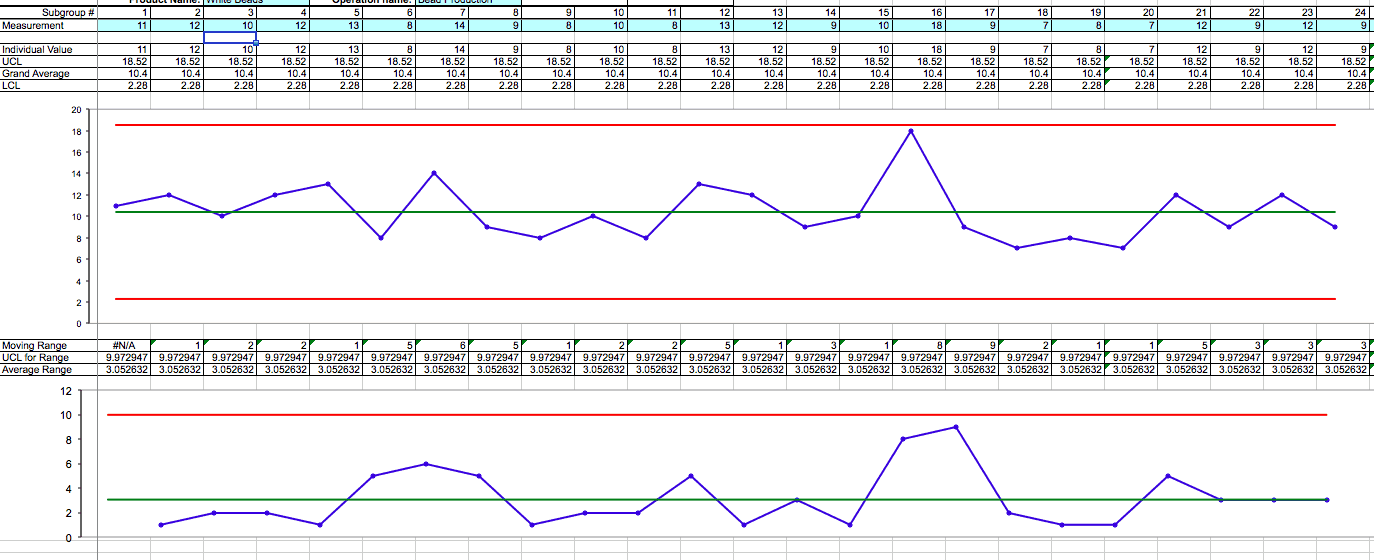

After the game, I produced a control chart that shows the Red Bead system is a stable system:

The top chart is the control chart for the number of red beads. The upper and lower control limits are about 18.5 and 2.25, meaning any single data point within that range is likely “noise,” or “common cause” variation. Even the person who got 18 red beads didn't do anything different… it was just chance. Even hitting the goal of 3 red beads was possible through chance. The bottom chart is a moving range (“MR”) chart showing the variation from round to round. You can also do a simpler chart where the standard deviation (SD) is the square root of the average, the UCL is the average plus 3 × SD, and the LCL is the average minus 3 × SD.
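For anyone who wants to compute limits like these by hand, here is a hedged sketch of two common approaches: an individuals (XmR) chart, which uses the average moving range and the conventional 2.66 scaling factor, and the simpler count-based version, where the standard deviation is taken as the square root of the average. The function names and the example counts are made up for illustration; they are not the data from our session.

```python
from math import sqrt
from statistics import mean


def xmr_limits(counts):
    """(LCL, center, UCL) for an individuals chart.

    Limits are the average plus or minus 2.66 times the average moving range.
    """
    center = mean(counts)
    moving_ranges = [abs(b - a) for a, b in zip(counts, counts[1:])]
    spread = 2.66 * mean(moving_ranges)
    return max(0.0, center - spread), center, center + spread


def c_chart_limits(counts):
    """(LCL, center, UCL) using SD = square root of the average count."""
    center = mean(counts)
    spread = 3 * sqrt(center)
    return max(0.0, center - spread), center, center + spread


if __name__ == "__main__":
    # HYPOTHETICAL red-bead counts, for illustration only
    example_counts = [9, 12, 7, 11, 10, 14, 8, 10]
    print("XmR limits:    ", xmr_limits(example_counts))
    print("c-chart limits:", c_chart_limits(example_counts))
```

Both functions clip the lower limit at zero, since a paddle can't hold a negative number of red beads. Any point inside the limits is treated as common cause variation.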

But, rather than diving into statistics, the lessons of the game are about management and how we react to a system.

We too often blame individuals for performance that's driven by the system. We overreact to every up and down, or to every above- or below-average data point. Things like incentives, threats, goals, and posters don't improve the system. It's impossible to “try harder” to make more white beads.

I was training the “willing workers” as part of a typical command and control system. I was being “the boss” and wasn't asking for input or ideas. It was interesting that nobody complained or spoke up to ask about improving the system, such as sorting out the red beads. I didn't create an environment where it was safe to speak up. One of the participants asked me why I didn't ask for input. I asked, “Why didn't you speak up?” That impasse can only be broken by management, I think.

In our debrief, we talked about lessons from the attendees, and they included:

- It demonstrates that the process is flawed and that you have to engage people in improvement.

- Management creates conditions where people can speak up.

- Sometimes you can do everything according to procedures and still get defects.

- The system is management's responsibility, but everybody can play a role in improvement.

The healthcare people in the room suggested that some problems are essentially “red beads,” including defects and harm like patient falls, hospital-acquired infections, and pressure ulcers. It's hard to think of harm as being somewhat randomly occurring, but I think it's true.

A nurse pointed out that YOU can do everything right, but your patient might still get an infection because others are not following proper protocols and you can't control that. It would be unfair to reward or punish nurses based on their patients' infection rates.

You might have two units where Unit A doesn't follow protocols properly, but still goes a week without a patient getting a pressure ulcer. Unit B might do everything properly and still have a patient with a pressure ulcer. Traditional management would only reward the results instead of managing the process. Which unit, A or B, did a better job?

These are the types of issues and questions that the Red Bead experiment raises for discussion.

Have you ever used this simulation in your organization? I'd be happy to come facilitate it for you as part of a session on Lean or systems improvement. If you can get a majority of your senior leadership team to actually attend and participate, I'll give you VERY good pricing. Let me know.


Great article, Mark! I’ve been using the red bead experiment along with other lean/systems thinking methods at our behavioral health agency.

That’s great, Hal. Do people readily make the connections between the game and their work? Does it challenge their thinking in a good way? Do you get senior leaders involved?

There are also blog posts (that I wrote on The W. Edwards Deming Institute blog) with additional commentary and links for those videos (the links are also on YouTube, but you don’t see them if you just watch the videos here).

http://blog.deming.org/2014/03/lessons-from-the-red-bead-experiment-with-dr-deming/

Thanks for adding that link, John!
